Imaging Systems and Image Processing|42 Article(s)

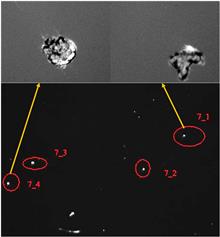

Non-blind super-resolution reconstruction for laser-induced damage dark-field imaging of optical elements

Qian Wang, Fengdong Chen, Yueyue Han, Fa Zeng, Cheng Lu, and Guodong Liu

The laser-induced damage detection images used in high-power laser facilities have a dark background, few textures with sparse and small-sized damage sites, and slight degradation caused by slight defocus and optical diffraction, which make image super-resolution (SR) reconstruction challenging. We propose a non-blind SR reconstruction method based on UNet that mixes high-, intermediate-, and low-frequency information at each stage of pixel reconstruction. We simplify the channel attention mechanism and activation function to focus on the useful channels and keep the global information in the features. We pay more attention to the damage area in the loss function of our end-to-end deep neural network. To construct a data set of high- and low-resolution image pairs, we precisely measure the point spread function (PSF) of a low-resolution imaging system by using a Bernoulli calibration pattern; the influence of different distances and lateral positions on the PSFs is also considered. A high-resolution camera is used to acquire the ground-truth images, which are convolved with the measured PSFs to create the low-resolution images of the paired data set. Trained on the data set, our network has achieved better results, which proves the effectiveness of our method.

Chinese Optics Letters

- Publication Date: Apr. 17, 2024

- Vol. 22, Issue 4, 041701 (2024)
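The data-set construction described in the abstract above (a ground-truth image convolved with a measured PSF) can be sketched roughly as follows. This is a minimal illustration, not the authors' pipeline: the function name `degrade`, the block-averaging downsampling step, the `factor` parameter, and the Gaussian stand-in for a measured PSF are all assumptions.

```python
import numpy as np

def degrade(hr, psf, factor):
    """Blur a high-resolution image with a PSF, then downsample by `factor`."""
    psf = psf / psf.sum()  # normalise so total intensity is preserved
    # Circular convolution via FFT (assumed boundary handling): the PSF is
    # zero-padded to the image size and shifted so its centre is at the origin.
    pad = np.zeros_like(hr, dtype=float)
    ph, pw = psf.shape
    pad[:ph, :pw] = psf
    pad = np.roll(pad, (-(ph // 2), -(pw // 2)), axis=(0, 1))
    blurred = np.real(np.fft.ifft2(np.fft.fft2(hr) * np.fft.fft2(pad)))
    # Average over factor x factor blocks to emulate coarser sensor pixels.
    h, w = blurred.shape
    h, w = h - h % factor, w - w % factor
    blocks = blurred[:h, :w].reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))

# Stand-in Gaussian PSF (assumption); the paper uses measured PSFs instead.
ax = np.arange(-3, 4)
psf = np.exp(-(ax[:, None] ** 2 + ax[None, :] ** 2) / (2 * 1.5 ** 2))
hr = np.zeros((64, 64))
hr[32, 32] = 1.0                 # a single bright "damage site" (made up)
lr = degrade(hr, psf, factor=4)  # 16 x 16 low-resolution counterpart
```

Pairs of `hr` and `lr` produced this way would serve as training targets and inputs, respectively; position-dependent PSFs, as considered in the abstract, would need a different PSF per image region.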

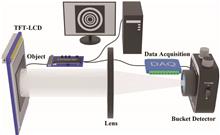

Measurable speckle gradation Hadamard single-pixel imaging

Liyu Zhou, Yanfeng Bai, Qin Fu, Xiaohui Zhu, Xianwei Huang, Xuanpengfan Zou, and Xiquan Fu

The array spatial light field is an effective means for improving imaging speed in single-pixel imaging. However, distinguishing the intensity values of each sub-light field in the array spatial light field requires the help of an array detector or a time-consuming deep-learning algorithm. To address this problem, we propose measurable speckle gradation Hadamard single-pixel imaging (MSG-HSI), which makes the most of the refresh mechanism of the device that generates the Hadamard speckle patterns and of the high sampling rate of the bucket detector, and can measure the light intensity fluctuation of the array spatial light field with only a simple bucket detector. The numerical and experimental results indicate that data acquisition in MSG-HSI is 4 times faster than in traditional Hadamard single-pixel imaging. Moreover, imaging quality in MSG-HSI can be further improved by image stitching technology. Our approach may open a new perspective for single-pixel imaging to improve imaging speed.

Chinese Optics Letters

- Publication Date: Mar. 25, 2024

- Vol. 22, Issue 3, 031104 (2024)
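For context, the traditional Hadamard single-pixel imaging baseline that the abstract above compares against can be sketched as below. This shows only the standard full-sampling scheme with differential (positive/negative) patterns, not MSG-HSI itself; the helper `hadamard`, the toy object, and the image size are assumptions made for illustration.

```python
import numpy as np

def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix; n must be a power of two."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h

side = 8
obj = np.zeros((side, side))
obj[2:6, 3:5] = 1.0                       # toy object (made up)
x = obj.ravel()

H = hadamard(side * side)                 # each row is one +1/-1 pattern
pos, neg = (1 + H) / 2, (1 - H) / 2       # displayable 0/1 pattern pairs
y = pos @ x - neg @ x                     # differential bucket signal, equals H @ x
recon = (H.T @ y / H.shape[0]).reshape(side, side)  # uses H.T @ H = N * I
```

With all N patterns measured and no noise, `recon` equals `obj`; speed-ups such as the one reported above come from changing how these measurements are acquired.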

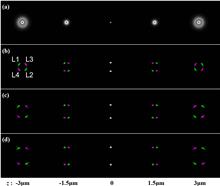

Geometric phase helical PSF for simultaneous orientation and 3D localization microscopy

Yongzhuang Zhou, Hongshuo Zhang, Yong Shen, Andrew R. Harvey, and Hongxin Zou

The 3D location and dipole orientation of light emitters provide essential information in many biological, chemical, and physical systems. Simultaneous acquisition of both information types typically requires pupil engineering for 3D localization and dual-channel polarization splitting for orientation deduction. Here we report a geometric phase helical point spread function for simultaneously estimating the 3D position and dipole orientation of point emitters. It has a more compact and simpler optical configuration than polarization-splitting techniques and yields achromatic phase modulation, in contrast to pupil engineering based on dynamic phase, showing great potential for single-molecule orientation and localization microscopy.

Chinese Optics Letters

- Publication Date: Mar. 25, 2024

- Vol. 22, Issue 3, 031103 (2024)

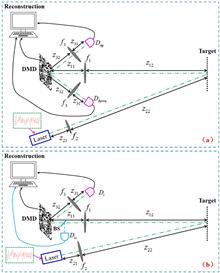

Enhancing the ability of single-pixel imaging against the source’s energy fluctuation by complementary detection

Junjie Cai and Wenlin Gong

The source’s energy fluctuation has a great effect on the quality of single-pixel imaging (SPI). When the method of complementary detection is introduced into an SPI camera system and the echo signal is corrected with the summation of the light intensities recorded by two complementary detectors, we demonstrate, by both experiments and simulations, that complementary single-pixel imaging (CSPI) is robust to the source’s energy fluctuation. The superiority of the CSPI structure is also discussed in comparison with previous SPI via signal monitoring.

Chinese Optics Letters

- Publication Date: Mar. 22, 2024

- Vol. 22, Issue 3, 031101 (2024)
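The correction described in the abstract above can be illustrated with a small simulation. This is a sketch under stated assumptions, not the authors' procedure: it assumes the correction is a division of one detector's signal by the sum of both, a multiplicative per-shot energy model, random binary patterns, and a plain correlation reconstruction; all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

n = 16                                   # image is n x n pixels
obj = np.zeros((n, n))
obj[4:12, 6:10] = 1.0                    # simple bar target (made up)
x = obj.ravel()

m = 4000                                 # number of random binary patterns
patterns = rng.integers(0, 2, size=(m, n * n)).astype(float)
energy = 1.0 + 2.0 * rng.random(m)       # per-shot source energy (assumed model)

b_pos = energy * (patterns @ x)          # detector 1 sees pattern P
b_neg = energy * ((1.0 - patterns) @ x)  # detector 2 sees the complement 1 - P

def correlate(signal):
    """Covariance between the bucket signal and each pattern pixel, as an image."""
    centred = patterns - patterns.mean(axis=0)
    return ((signal - signal.mean()) @ centred / m).reshape(n, n)

raw = correlate(b_pos)                          # energy fluctuation left in
ratio = b_pos / (b_pos + b_neg)                 # energy cancels in the ratio
corrected = correlate(ratio)
```

Because `b_pos + b_neg` is proportional to the shot energy, `ratio` no longer depends on `energy` at all, which is the sense in which the complementary sum makes the measurement robust in this toy model.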

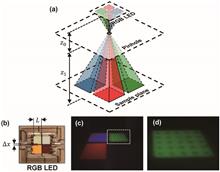

High-resolution portable lens-free on-chip microscopy with RGB LED via pinhole array

Qinhua Wang, Jianshe Ma, Liangcai Cao, and Ping Su

Lens-free on-chip microscopy with RGB LEDs (LFOCM-RGB) provides a portable, cost-effective, and high-throughput imaging tool for resource-limited environments. However, the weak coherence of LEDs limits high-resolution imaging, and the luminous surfaces of the LED chips on the RGB LED do not overlap, so coherence-enhancement schemes tend to undermine the portable and cost-effective implementation. Here, we propose a specially designed pinhole array to enhance coherence in a portable and cost-effective implementation. It modulates the three-color beams from the RGB LED separately so that they effectively overlap on the sample plane while reducing the effective light-emitting area for better spatial coherence. The separate modulation of the spatial coherence allows the temporal coherence to be modulated separately by single spectral filters rather than by expensive triple spectral filters. Based on the pinhole array, the LFOCM-RGB simply and effectively realizes high-resolution imaging in a portable and cost-effective implementation, offering much flexibility for various applications in resource-limited environments.

Chinese Optics Letters

- Publication Date: Feb. 20, 2024

- Vol. 22, Issue 2, 021101 (2024)

Differential interference contrast phase edging net: an all-optical learning system for edge detection of phase objects

Yiming Li, Ran Li, Quan Chen, Haitao Luan, Haijun Lu, Hui Yang, Min Gu, and Qiming Zhang

Edge detection for low-contrast phase objects cannot be performed directly by the spatial difference of intensity distribution. In this work, a diffractive neural network (DPENet) based on the differential interference contrast principle is proposed to detect the edges of phase objects in an all-optical manner. Edge information is encoded into an interference light field by dual Wollaston prisms without lenses and processed at the speed of light by the diffractive neural network to obtain scale-adjustable edges. Simulation results show that DPENet achieves F-scores of 0.9308 (MNIST) and 0.9352 (NIST) and enables real-time edge detection of biological cells, achieving an F-score of 0.7462.

Chinese Optics Letters

- Publication Date: Jan. 08, 2024

- Vol. 22, Issue 1, 011102 (2024)

Optimizing depth of field in 3D light-field display by analyzing and controlling light-beam divergence angle

Xunbo Yu, Yiping Wang, Xin Gao, Hanyu Li, Kexin Liu, Binbin Yan, and Xinzhu Sang

The concept of divergence angle of light beams (DALB) is proposed to analyze the depth of field (DOF) of a 3D light-field display system. A mathematical model relating DOF and DALB is established, from which it is concluded that DOF and DALB are inversely proportional. To reduce DALB and generate clear depth perception, a triple composite aspheric lens structure with a viewing angle of 100° is designed and experimentally demonstrated. The DALB-constrained 3D light-field display system significantly improves the clarity of 3D images and also performs well when imaging a 3D scene with a DOF over 30 cm.

Chinese Optics Letters

- Publication Date: Jan. 08, 2024

- Vol. 22, Issue 1, 011101 (2024)

Underwater ghost imaging with pseudo-Bessel-ring modulation pattern|Editors' Pick

Zhe Sun, Tong Tian, Sukyoon Oh, Jiang Wang, Guanghua Cheng, and Xuelong Li

In this study, we propose an underwater ghost-imaging scheme using a modulation pattern combining offset-position pseudo-Bessel-ring (OPBR) and random binary (RB) speckle pattern illumination. We design the experiments based on modulation rules to order the OPBR speckle patterns. We retrieve ghost images with OPBR beams of different modulation speckle sizes. The obtained ghost images have a better contrast-to-noise ratio than RB beam ghost imaging under the same conditions. We verify the results in both experiment and simulation. In addition, we check the image quality at different turbidities. Furthermore, we demonstrate that the OPBR speckle pattern also provides better image quality for other objects. The proposed method promises wide applications in highly scattering media, atmosphere, turbid water, etc.

Chinese Optics Letters

- Publication Date: Aug. 02, 2023

- Vol. 21, Issue 8, 081101 (2023)

A detail-enhanced sampling strategy in Hadamard single-pixel imaging|Editors' Pick

Yan Cai, Shijian Li, Wei Zhang, Hao Wu, Xuri Yao, and Qing Zhao

Hadamard single-pixel imaging is an appealing imaging technique due to its low hardware complexity and low industrial cost. To improve imaging efficiency, many studies have focused on sorting Hadamard patterns to obtain reliable reconstructed images with very few samples. In this study, we propose an efficient Hadamard basis sampling strategy that employs an exponential probability function to sample Hadamard patterns in a direction with high energy concentration of the Hadamard spectrum. We use a compressed-sensing algorithm for image reconstruction. The simulation and experimental results show that this sampling strategy can reconstruct objects reliably and preserve the edges and details of images.

Chinese Optics Letters

- Publication Date: Jul. 24, 2023

- Vol. 21, Issue 7, 071101 (2023)

Passive non-line-of-sight imaging for moving targets with an event camera

Conghe Wang, Yutong He, Xia Wang, Honghao Huang, Changda Yan, Xin Zhang, and Hongwei Chen

Non-line-of-sight (NLOS) imaging is an emerging technique for detecting objects behind obstacles or around corners. Recent studies on passive NLOS mainly focus on steady-state measurement and reconstruction methods, which show limitations in the recognition of moving targets. We propose what is, to the best of our knowledge, a novel event-based passive NLOS imaging method. We acquire asynchronous event-based data of the diffusion spot on the relay surface, which contains detailed dynamic information about the NLOS target and efficiently eases the degradation caused by target movement. In addition, we demonstrate the event-based cues based on the derivation of an event-NLOS forward model. Furthermore, we propose the first event-based NLOS imaging data set, EM-NLOS, and the movement feature is extracted by time-surface representation. We compare the reconstructions from event-based data with those from frame-based data. The event-based method performs well on peak signal-to-noise ratio and learned perceptual image patch similarity, on which it is 20% and 10% better, respectively, than the frame-based method.

Chinese Optics Letters

- Publication Date: May 26, 2023

- Vol. 21, Issue 6, 061103 (2023)
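The time-surface representation mentioned in the abstract above is commonly built by keeping the most recent event timestamp at each pixel and applying an exponential decay. The sketch below follows that common form only; the exact variant used for EM-NLOS is not given here, and the function name `time_surface`, the decay constant `tau`, the reference time `t_ref`, and the `(x, y, t)` event layout are assumptions.

```python
import numpy as np

def time_surface(events, shape, t_ref, tau):
    """Exponentially decayed map of the most recent event time at each pixel.

    events: iterable of (x, y, t) tuples sorted by t (polarity ignored here).
    shape:  (height, width) of the sensor.
    """
    last = np.full(shape, -np.inf)       # -inf marks "no event seen yet"
    for x, y, t in events:
        if t <= t_ref:
            last[y, x] = t               # later events overwrite earlier ones
    return np.exp((last - t_ref) / tau)  # exp(-inf) = 0 for silent pixels

# Three toy events on a 2 x 2 sensor (made-up data).
events = [(0, 0, 0.0), (1, 0, 0.5), (0, 0, 1.0)]
ts = time_surface(events, shape=(2, 2), t_ref=1.0, tau=0.5)
```

Pixels that fired most recently get values near 1, older activity fades toward 0, and pixels with no events stay at 0, so the map encodes recent motion in a single frame-like array.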
